Institute of Formal and Applied Linguistics

Charles University, Czech Republic

Faculty of Mathematics and Physics

MT-ComparEval

Try it online before installing on your server

- http://wmt.ufal.cz: all systems from WMT 2014–2016

- http://mt-compareval.ufal.cz: upload and analyze your own translations

Description

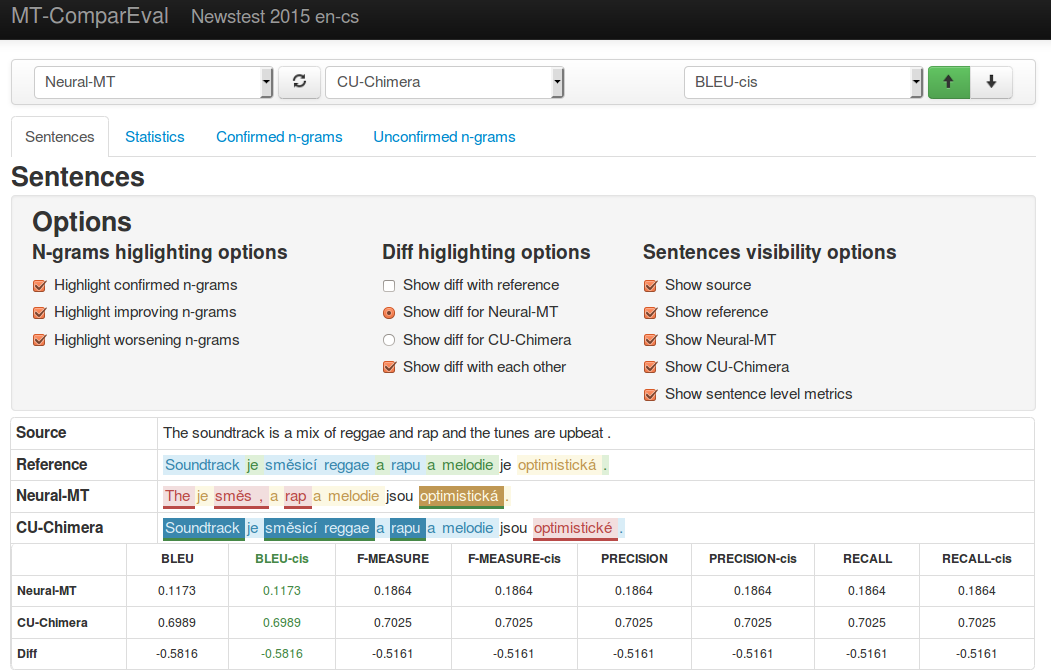

MT-ComparEval is a tool for comparison and evaluation of machine translations. It allows users to compare translations according to several criteria, such as:

- automatic metrics of machine translation quality computed either on whole documents or single sentences

- quality comparison of single-sentence translations by highlighting confirmed, improving, and worsening n-grams

- summaries of the most improving and worsening n-grams for the whole document.
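The n-gram categories above can be illustrated with a minimal Python sketch. It is not taken from the MT-ComparEval code base. It assumes the following interpretation: a confirmed n-gram occurs in both system outputs and in the reference, an improving n-gram occurs in the reference and in only one system's output, and a worsening n-gram occurs in only one system's output and not in the reference. The function names `ngram_set` and `classify_ngrams` are hypothetical, and the sketch ignores n-gram counts for simplicity.

```python
def ngram_set(tokens, n):
    """Return the set of n-grams (as tuples) of order n in a token list."""
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def classify_ngrams(reference, system, other_system, n=1):
    """Classify the n-grams of `system` relative to the reference and a competing system.

    Assumed definitions (an interpretation, not the tool's actual code):
      confirmed: in both system outputs and in the reference
      improving: in `system` and the reference, but not in `other_system`
      worsening: in `system` only, in neither the reference nor `other_system`
    """
    ref = ngram_set(reference.split(), n)
    sys_ngrams = ngram_set(system.split(), n)
    other = ngram_set(other_system.split(), n)
    return {
        "confirmed": sys_ngrams & other & ref,
        "improving": (sys_ngrams & ref) - other,
        "worsening": sys_ngrams - ref - other,
    }


reference = "the cat sat on the mat"
output_a = "the cat sat on a mat"
output_b = "a cat is on the mat"

result_a = classify_ngrams(reference, output_a, output_b)
result_b = classify_ngrams(reference, output_b, output_a)
```

Calling the function once per system, with the arguments swapped, gives each system's improving and worsening n-grams. Here the unigram `sat` is improving for the first system and `is` is worsening for the second.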

MT-ComparEval also plots a chart of the absolute differences between metric values computed on single sentences, and a chart of the values obtained from paired bootstrap resampling.
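For readers unfamiliar with paired bootstrap resampling, the idea can be sketched in a few lines of Python. This is an illustrative sketch, not the tool's implementation. It assumes that each system already has one score per sentence and that the document-level score is the mean of those scores; a corpus-level metric such as BLEU would instead be recomputed on every resampled set. The function name `paired_bootstrap` and its parameters are hypothetical.

```python
import random


def paired_bootstrap(scores_a, scores_b, samples=1000, seed=0):
    """Estimate how often each system wins on resampled test sets.

    scores_a and scores_b hold per-sentence scores for the same sentences,
    in the same order. Each resample draws sentence indices with replacement
    and applies the same indices to both systems (hence "paired").
    Returns the fraction of resamples won by A and by B; ties count for neither.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both systems must be scored on the same sentences")
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(scores_a)
    wins_a = wins_b = 0
    for _ in range(samples):
        indices = [rng.randrange(n) for _ in range(n)]
        mean_a = sum(scores_a[i] for i in indices) / n
        mean_b = sum(scores_b[i] for i in indices) / n
        if mean_a > mean_b:
            wins_a += 1
        elif mean_b > mean_a:
            wins_b += 1
    return wins_a / samples, wins_b / samples


always_better = paired_bootstrap([1.0, 1.0, 1.0], [0.0, 0.0, 0.0])
identical = paired_bootstrap([0.5, 0.2, 0.9], [0.5, 0.2, 0.9])
```

A system that wins in a large share of the resamples (95% is a common threshold) is usually considered significantly better on that test set.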

How to cite

    @article{KlejchEtAl:2015,
      journal = {The Prague Bulletin of Mathematical Linguistics},
      title = {{MT}-ComparEval: Graphical evaluation interface for Machine Translation development},
      author = {Ond{\v{r}}ej Klejch and Eleftherios Avramidis and Aljoscha Burchardt and Martin Popel},
      year = {2015},
      number = {104},
      pages = {63--74},
      issn = {0032-6585},
    }